Open-source AI video generation has entered its "production-ready" era, and choosing between LTX 2.3 and Wan 2.2 has become one of the most common questions in the creator and developer community. This comparison will help you decide which model fits your workflow, whether you're building a content platform, producing short films, or prototyping creative concepts.

Want to try LTX 2.3 right now? Try it online for free on LTX23.org: no setup required, generate AI video directly in your browser.

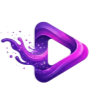

TL;DR — LTX 2.3 vs Wan 2.2 at a Glance

Before diving deep, here's a quick summary table of how LTX 2.3 and Wan 2.2 compare across the key dimensions that matter most:

| Feature | LTX 2.3 | Wan 2.2 |
|---|---|---|
| Developer | Lightricks | Alibaba Tongyi Lab |
| Architecture | DiT (Diffusion Transformer) | 27B MoE (Mixture-of-Experts) |
| Max Resolution | Up to 4K | 480p – 720p (typical) |
| Max Duration | ~20 seconds | ~5 seconds |
| Frame Rate | 24 / 25 / 48 / 50 fps | 24 fps |
| Native Audio | Yes (synchronized audio-video) | No (video only; S2V variant available) |
| Portrait Mode | Native 1080×1920 | Not native |
| Image-to-Video | Improved (less freezing, less Ken Burns) | Strong auto-prompt derivation |
| Inference Speed | ~1.22 s/step on H100; 18× faster than Wan 14B | Moderate; optimized for quality |
| Consumer GPU | Possible with distilled/quantized | 5B Hybrid runs on RTX 4090 at 720p |
| License | Apache 2.0 (free for revenue < $10M) | Open-source (check specific terms) |
| ComfyUI Support | Yes (growing) | Yes (mature ecosystem) |

What Is LTX 2.3?

LTX 2.3 is the latest release from Lightricks, built on a DiT (Diffusion Transformer) architecture. It is a single foundation model that generates synchronized video and audio in one diffusion pass, a capability that sets it apart in the open-source landscape. You can try it online at LTX23.org to see this firsthand.

Key highlights of LTX 2.3 include:

- Rebuilt VAE: Trained on higher-quality data, producing sharper fine textures — hair, text, and edge details are preserved even at 4K.

- 4× larger text connector: Complex prompts with multiple subjects, spatial relationships, and stylistic instructions now resolve much more accurately.

- Native portrait video: Generate vertical 1080×1920 content trained on portrait-orientation data, not cropped from landscape.

- Cleaner audio: Filtered training data and a new vocoder reduce artifacts and improve alignment.

- Stronger Image-to-Video: Less freezing, fewer "Ken Burns slow pan" artifacts, and better visual consistency with the input frame.

LTX 2.3 supports text-to-video, image-to-video, audio-to-video, video-extend, and retake-video workflows — all within the same model. It offers two generation flows: Fast Flow (prioritizing speed and iteration) and Pro Flow (prioritizing maximum visual quality).

Model checkpoints available: Full dev (bf16), distilled (8 steps, CFG=1), fp8 quantized, spatial upscaler (×1.5, ×2), and temporal upscaler (×2).
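
Why the distilled checkpoint's CFG=1 setting matters can be sketched with the standard classifier-free guidance formula. This is an illustration rather than LTX code: `apply_cfg`, `forward_passes_per_step`, and the toy vectors are hypothetical names and values, and the assumption that a sampler skips the unconditional pass at a scale of 1 is a common optimization, not something confirmed for LTX 2.3.

```python
from typing import List, Sequence


def apply_cfg(cond: Sequence[float], uncond: Sequence[float], scale: float) -> List[float]:
    """Standard classifier-free guidance blend: uncond + scale * (cond - uncond)."""
    return [u + scale * (c - u) for c, u in zip(cond, uncond)]


def forward_passes_per_step(scale: float) -> int:
    """Assumption: the unconditional pass is skipped when the scale is exactly 1."""
    return 1 if scale == 1.0 else 2


# Toy model outputs for one denoising step (hypothetical values).
cond = [0.5, -1.0, 2.0]
uncond = [0.25, 0.0, 1.0]

# At scale 1 the blend is just the conditional prediction.
print(apply_cfg(cond, uncond, 1.0))  # [0.5, -1.0, 2.0]

# 8 distilled steps with one forward pass each.
print(8 * forward_passes_per_step(1.0))  # 8
```

Under these assumptions, an 8-step run at CFG=1 costs 8 forward passes, while the same step count with a guidance scale above 1 would cost 16.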

What Is Wan 2.2?

Wan 2.2 is developed by Alibaba's Tongyi Lab and built on a 27B Mixture-of-Experts (MoE) diffusion architecture. Instead of routing all computation through a single network, Wan 2.2 uses specialized "experts" — a high-noise expert for structural layout and a low-noise expert for textures, lighting, and fine details.

Key highlights of Wan 2.2 include:

- MoE efficiency: Switches experts as denoising progresses, handling broad structure first and fine details later, which boosts quality without a proportional increase in cost.

- Cinematic quality: Excels at complex camera motion, narrative composition, and film-grade lighting that rivals closed-source models.

- Strong prompt fidelity: Among the best at faithfully translating complex natural language instructions into visual output.

- Massive training data: +65.6% image data and +83.2% video data compared to previous versions, dramatically improving generalization.

Wan 2.2 ships in three main variants:

- T2V (Text-to-Video): 480p–720p clips from text prompts

- I2V (Image-to-Video): Animate a single image into video, with optional text guidance

- Hybrid / TI2V-5B: A compact 5B-parameter model for both T2V and I2V at 720p@24fps on consumer GPUs

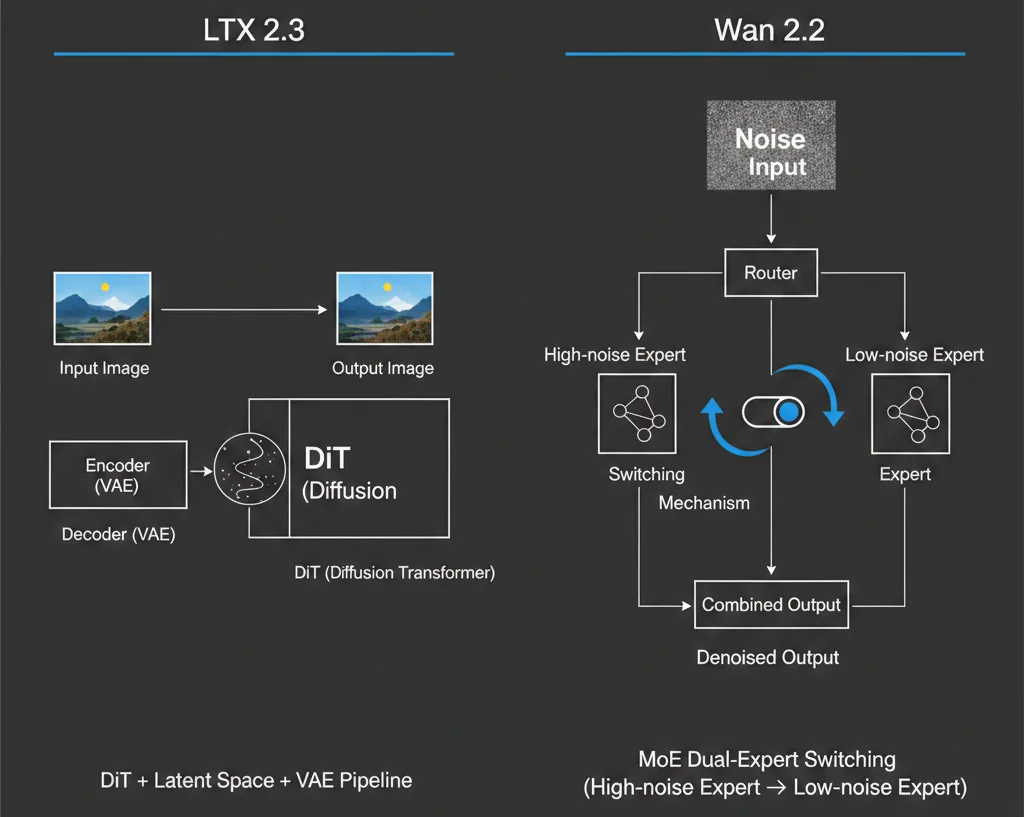

Architecture: DiT vs. MoE — How They Differ

Understanding the architectural difference is key to understanding the LTX 2.3 vs Wan 2.2 trade-offs.

LTX 2.3: Latent Diffusion Transformer

LTX 2.3 operates in a compressed latent space — the model first generates a compact representation of the video, then decodes it back to full resolution via the rebuilt VAE. This approach:

- Makes high-resolution (up to 4K) generation feasible without extreme memory requirements

- Enables faster iteration cycles — ideal for interactive creative tools

- Allows simultaneous audio generation in the same latent pass
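
A rough sense of why a compressed latent space matters comes from the size of the raw pixels alone. The sketch below assumes "4K" means 3840×2160 at 8 bits per channel, and the 100× compression factor is a hypothetical placeholder, not a published LTX 2.3 figure.

```python
def raw_video_bytes(width: int, height: int, fps: int, seconds: int,
                    channels: int = 3, bytes_per_value: int = 1) -> int:
    """Size of an uncompressed clip, one byte per colour channel per pixel by default."""
    frames = fps * seconds
    return width * height * channels * bytes_per_value * frames


def latent_bytes(raw_bytes: int, compression: float) -> float:
    """Size after an overall compression factor (hypothetical, not an LTX 2.3 spec)."""
    return raw_bytes / compression


# Assumption: 3840x2160 frames; 24 fps and 20 s are the figures quoted in this article.
raw = raw_video_bytes(3840, 2160, fps=24, seconds=20)
print(f"raw pixels: {raw / 1e9:.2f} GB")  # raw pixels: 11.94 GB

# Placeholder factor, purely to show the order-of-magnitude effect.
print(f"latent at 100x: {latent_bytes(raw, 100) / 1e6:.0f} MB")
```

Attention cost grows with the number of positions the transformer has to process, so running diffusion on the smaller latent rather than on raw pixels is what keeps 4K generation tractable.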

The 4× larger gated attention text connector in version 2.3 is a major upgrade: it bridges the language model's understanding and the visual generation much more tightly, so prompts with multiple subjects, timing instructions, and stylistic cues are rendered far more faithfully than in LTX 2.0.

Wan 2.2: Mixture-of-Experts Diffusion

Wan 2.2 uses a 27-billion-parameter MoE model that splits the denoising process between two specialized experts, with one expert responsible for each stage:

- High-noise expert: Handles the early stages — overall structure, composition, and motion planning

- Low-noise expert: Handles the later stages — textures, lighting, color grading, and fine detail

This division lets the model scale capacity without linearly scaling compute, resulting in:

- Superior motion coherence and complex camera movements

- Better preservation of scene intent across frames

- Especially strong performance on cinematic, narrative-driven scenes

The trade-off: Wan 2.2's approach prioritizes structured, deliberate generation. It tends to be slower per step but delivers more polished results with fewer retries on complex prompts.
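
The two-expert split described above can be sketched as a denoising loop that picks one expert per step based on the current noise level. This is a toy illustration, not Wan 2.2's implementation: the expert functions, the `boundary` value of 0.6, and the noise schedule are all hypothetical.

```python
from typing import Callable, Dict, List, Sequence, Tuple

Latent = List[float]
Expert = Callable[[Latent], Latent]


def high_noise_expert(x: Latent) -> Latent:
    """Toy stand-in for the expert that handles layout and motion planning."""
    return [v * 0.5 for v in x]


def low_noise_expert(x: Latent) -> Latent:
    """Toy stand-in for the expert that handles texture and lighting."""
    return [v * 0.9 for v in x]


EXPERTS: Dict[str, Expert] = {
    "high_noise": high_noise_expert,
    "low_noise": low_noise_expert,
}


def pick_expert(noise_level: float, boundary: float) -> str:
    """Early (noisy) steps go to one expert, late (clean) steps to the other."""
    return "high_noise" if noise_level >= boundary else "low_noise"


def denoise(x: Latent, noise_levels: Sequence[float], boundary: float) -> Tuple[Latent, List[str]]:
    """Run one expert per step and record which expert handled each step."""
    trace: List[str] = []
    for level in noise_levels:
        name = pick_expert(level, boundary)
        x = EXPERTS[name](x)
        trace.append(name)
    return x, trace


# Hypothetical schedule running from pure noise (1.0) toward a clean sample.
_, trace = denoise([1.0, -2.0], [1.0, 0.75, 0.5, 0.25], boundary=0.6)
print(trace)  # ['high_noise', 'high_noise', 'low_noise', 'low_noise']
```

Because only one expert runs at any step, the per-step compute is that of a single expert even though the total parameter count covers both, which is the sense in which capacity grows without compute growing in proportion.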

Resolution, Duration & Visual Quality

This is where the LTX 2.3 vs Wan 2.2 gap is most visible.

LTX 2.3: 4K, 20 Seconds, Multi-FPS

| Spec | LTX 2.3 |
|---|---|
| Resolutions | 1080p, 1440p, 4K |
| Frame rates | 24 / 25 / 48 / 50 fps |
| Max duration | ~20 seconds per clip |
| Extension | Video-extend endpoint for longer sequences |
| Portrait | Native 1080×1920 (9:16) |

LTX 2.3's rebuilt VAE makes a real difference: fine details like hair strands, on-screen text, small objects, and edge boundaries stay sharp even at 4K — reducing the need for external super-resolution post-processing.

Wan 2.2: 720p, 5 Seconds, Cinematic Focus

| Spec | Wan 2.2 |
|---|---|
| Resolutions | 480p – 720p (primary) |
| Frame rates | 24 fps |
| Max duration | ~5 seconds per clip |
| VAE compression | 16×16 spatial × 4 temporal (overall rate 64) |
| Consumer model | TI2V-5B at 720p@24fps |

Wan 2.2's strength isn't in raw resolution or duration — it's in how those 5 seconds look. The MoE architecture delivers exceptional motion coherence, cinematic camera movements, and film-grade color and lighting that many creators consider best-in-class among open-source models.
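
The VAE compression row combines a 16×16 spatial reduction with a 4× temporal one, which multiplies out to 1,024 pixel positions per latent position, not 64. The quoted overall rate of 64 is consistent with that if the channel count grows during encoding. The sketch below works through the arithmetic; the 3 input channels (RGB) and 48 latent channels are assumptions, not figures stated in this article.

```python
from typing import Tuple


def overall_compression(t: int, h: int, w: int,
                        in_channels: int, latent_channels: int) -> float:
    """Ratio of input values to latent values for a T x H x W downsampling VAE."""
    return (t * h * w * in_channels) / latent_channels


def latent_grid(width: int, height: int, spatial_factor: int) -> Tuple[int, int]:
    """Latent width and height for a frame, using floor division."""
    return width // spatial_factor, height // spatial_factor


# Assumed channel counts: 3 (RGB) in, 48 latent channels out.
print(overall_compression(4, 16, 16, in_channels=3, latent_channels=48))  # 64.0

# A 1280x720 frame shrinks to an 80x45 latent grid under 16x spatial compression.
print(latent_grid(1280, 720, 16))  # (80, 45)
```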

Native Audio: LTX 2.3's Unique Advantage

One of the most significant differentiators in the LTX 2.3 vs Wan 2.2 comparison is native audio generation.

LTX 2.3 generates synchronized audio and video in a single diffusion pass. This means:

- Dialogue lip-syncs with character movement

- Environmental sounds match scene context

- Music and sound effects align with on-screen action

- No post-processing audio alignment needed

This makes LTX 2.3 ideal for:

- Character dialogue and talking-head videos

- Product demos with voiceover

- Short-form content that needs to be publish-ready without a separate audio pipeline

Wan 2.2 generates video only — no native audio output. For audio, you'd need external tools (TTS, music generation, or manual scoring). Wan 2.2 does offer a Speech-to-Video (S2V) variant that takes a static image + speech input to generate lip-synced video, but this is a separate specialized model, not part of the core T2V/I2V pipeline.

Bottom line: If your workflow requires audio-visual content in one step, LTX 2.3 is the clear winner. If you always add audio in post-production anyway, this advantage matters less.

Image-to-Video Quality

Both models support I2V (Image-to-Video), but they approach it differently.

LTX 2.3 specifically targets the I2V weaknesses of earlier releases:

- Reduced "freezing" artifacts where the image barely moves

- Reduced the "Ken Burns effect" (slow pan/zoom as a substitute for real motion)

- Better visual consistency — the generated video maintains the style, colors, and details of the input image

Wan 2.2 I2V includes an automatic prompt derivation feature — it can generate video from an image without any text input at all. When combined with text prompts, it produces well-directed results with strong motion coherence. The 14B I2V model excels at preserving the compositional intent of the source image.

Verdict: Version 2.3 has closed much of the I2V gap. For motion quality and camera control from a source image, Wan 2.2 still has an edge. For I2V with synchronized audio, LTX 2.3 is unmatched.

Inference Speed & Performance

Speed is a critical factor for production workflows, and here the LTX 2.3 vs Wan 2.2 difference is dramatic.

LTX 2.3: Built for Speed

- ~1.22 seconds per step on H100 — approximately 18× faster than Wan 2.2-14B

- Fast Flow variant sacrifices some detail for even quicker generation, ideal for A/B testing and rapid iteration

- Pro Flow variant maximizes quality while still being significantly faster than competitors

- Latent diffusion design means less compute per frame
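
The figures above translate into rough denoising times with simple multiplication. This is a back-of-the-envelope sketch: it assumes the ~1.22 s/step figure also applies to the 8-step distilled checkpoint and that the 18× ratio holds per step at comparable settings, neither of which the article confirms. Text encoding, VAE decoding, and upscaling are not counted.

```python
def denoising_seconds(steps: int, seconds_per_step: float) -> float:
    """Time spent in the denoising loop only."""
    return steps * seconds_per_step


LTX_SECONDS_PER_STEP = 1.22   # quoted H100 figure
SPEEDUP_VS_WAN_14B = 18       # quoted ratio

# Assumption: the distilled checkpoint's 8 steps each cost ~1.22 s.
ltx_distilled = denoising_seconds(8, LTX_SECONDS_PER_STEP)
print(f"LTX 2.3 distilled: {ltx_distilled:.2f} s")  # LTX 2.3 distilled: 9.76 s

# Per-step cost for Wan 2.2-14B implied by the 18x ratio.
wan_seconds_per_step = LTX_SECONDS_PER_STEP * SPEEDUP_VS_WAN_14B
print(f"Implied Wan 2.2-14B step: {wan_seconds_per_step:.2f} s")  # Implied Wan 2.2-14B step: 21.96 s
```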

Wan 2.2: Built for Quality

- The 27B MoE model is optimized for A100–H200 server GPU clusters

- Longer generation times per clip, but each clip tends to need fewer retries

- TI2V-5B compact model runs on consumer GPUs (RTX 4090) at 720p@24fps

- Community quantized versions (GGUF) further reduce hardware requirements
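
Whether a model fits on a given GPU starts with the memory needed for its weights, which is parameter count times bytes per parameter. The sketch below covers weights only; activations, the text encoder, and the VAE add to the total, and the byte widths (2 for bf16, 1 for an 8-bit format) are generic assumptions rather than measurements of either model.

```python
def weight_gib(params_billion: float, bytes_per_param: float) -> float:
    """Approximate weight memory in GiB for a given parameter count and precision."""
    return params_billion * 1e9 * bytes_per_param / 2**30


RTX_4090_GIB = 24  # the card's memory capacity

for label, params, width in [
    ("TI2V-5B, bf16", 5, 2),
    ("14B expert, bf16", 14, 2),
    ("14B expert, 8-bit", 14, 1),
    ("27B total, bf16", 27, 2),
]:
    gib = weight_gib(params, width)
    verdict = "fits" if gib < RTX_4090_GIB else "exceeds"
    print(f"{label}: {gib:.1f} GiB ({verdict} {RTX_4090_GIB} GiB before other overheads)")
```

On these assumptions the 5B model's weights sit comfortably under 24 GiB, while a single 14B expert only fits once it is quantized to about one byte per parameter, which is why quantized community builds matter for consumer hardware.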

For interactive platforms (online editors, real-time creative tools): LTX 2.3's speed advantage is decisive.

For batch production (generating a library of cinematic clips overnight): Wan 2.2's per-clip quality may offset longer generation times.

Ecosystem & Tooling Maturity

LTX 2.3 Ecosystem

- LTX23.org: Try LTX 2.3 online — text-to-video, image-to-video, and more, directly in your browser with no setup

- LTX Desktop: A production-ready video editor built on the LTX engine, launched alongside v2.3

- LTX API: Managed API endpoints (`ltx-2-3-fast` and `ltx-2-3-pro`) at 720p and 1080p

- ComfyUI: Updated custom nodes and reference workflows for T2V, I2V, and multi-stage generation with latent upscaling

- HuggingFace: Multiple checkpoints (dev, distilled, quantized, upscalers) available

- Growing community — ecosystem is rapidly expanding but still maturing

Wan 2.2 Ecosystem

- ComfyUI: Mature workflows, well-documented nodes, extensive community templates

- Cloud platforms: One-click deployment on various GPU cloud providers

- Quantized models: GGUF and other formats for consumer hardware

- Community content: Abundant tutorials, prompt libraries, and style LoRAs

- HuggingFace: Official weights for T2V, I2V, and TI2V-5B variants

- More established ecosystem — easier for beginners to find support and get up to speed

Ecosystem verdict: Wan 2.2 has the more mature and accessible community today. LTX 2.3 is catching up fast and offers a more integrated first-party toolchain (Desktop app + API + ComfyUI). The easiest way to get started with LTX 2.3 is through LTX23.org — zero setup, instant generation.

Prompt Adherence & Motion Quality

This is where subjective preferences play a big role in the LTX 2.3 vs Wan 2.2 choice.

Wan 2.2: The Prompt Fidelity Champion

Multiple independent reviews and community benchmarks consistently rate Wan 2.2 as one of the strongest open-source models for:

- Complex prompt faithfulness: Multi-subject scenes, specific spatial arrangements, and precise action descriptions

- Camera motion control: Dolly, pan, crane, tracking shots rendered with cinematic intent

- Aesthetic coherence: Film-grade lighting, color grading, and compositional balance

Some comparisons have found Wan 2.2 outperforms even newer models (including Wan 2.5 in certain scenarios) on prompt fidelity — a testament to its well-trained MoE architecture.

LTX 2.3: Closing the Gap

LTX 2.3's 4× larger text connector is a direct response to the prompt adherence challenge:

- Complex prompts with multiple subjects and spatial instructions resolve more accurately

- Timing, motion, and expression descriptions translate more faithfully

- The improvement from LTX 2.0 → 2.3 is described as "generational" by the dev team

However, for the most demanding cinematic prompts — especially those involving complex coordinated camera and subject motion — Wan 2.2 still holds an edge according to community feedback.

Licensing & Commercial Use

LTX 2.3

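- License: Described as Apache 2.0 for companies with annual revenue under $10M. Standard Apache 2.0 carries no revenue condition, so confirm the exact terms in the license file that ships with the weights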

- Commercial licensing: Available for larger enterprises via Lightricks' licensing program

- Derivatives: Full model is trainable; LoRA training takes less than an hour in many settings

- Very business-friendly for startups and indie developers

Wan 2.2

- License: Open-source/open-weights, available on HuggingFace

- Commercial use: Generally permitted, but specific terms should be reviewed against Alibaba's latest policies

- Community derivatives: Widely used for internal testing, concept generation, and some user-facing creative tools

For startups and small businesses, LTX 2.3's clear Apache 2.0 license with explicit commercial terms may be more reassuring from a legal compliance standpoint.

When to Choose LTX 2.3

LTX 2.3 is the better fit when your workflow requires:

- High resolution (1080p–4K) output for final production

- Longer clips (~20 seconds) without stitching

- Native audio-video sync — dialogue, sound effects, or ambient audio generated alongside video

- Fast iteration — interactive tools, A/B testing, or rapid prototyping

- Portrait/vertical video (9:16) — natively trained, not cropped

- Clear commercial licensing — Apache 2.0 for companies under $10M

Ideal use cases: Short dramas, ad intros, character dialogue videos, TikTok/Reels content creation platforms, online video editors, voice-over product demos.

When to Choose Wan 2.2

Wan 2.2 is the better fit when your workflow requires:

- Cinematic camera motion and composition — film-grade visual quality in 5-second clips

- Maximum prompt fidelity — complex multi-element scenes that must match your description precisely

- Existing ComfyUI pipeline — leverage mature ecosystem, LoRAs, and community workflows

- Consumer GPU deployment — the 5B Hybrid model runs well on RTX 4090

- Silent video drafts — when audio will be added in post-production

Ideal use cases: Film storyboards, product lifestyle videos, cinematic concept art, VFX previsualization, ComfyUI-based creative pipelines.

Can You Use Both?

Absolutely. Many production teams are finding that LTX 2.3 and Wan 2.2 are complementary rather than competitive:

- Use Wan 2.2 to generate hero shots, cinematic intros, and high-fidelity concept clips that require perfect composition and camera work

- Use LTX 2.3 for the bulk of content production — dialogue scenes, behind-the-scenes clips, vertical social media cuts, and anything requiring native audio

Both models integrate with ComfyUI, so you can build workflows that route different types of shots to different models based on requirements. You can start experimenting with LTX 2.3 right away at LTX23.org.
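
The routing idea can be sketched as a small rule-based selector that mirrors the "when to choose" criteria in this article. The `Shot` fields, the model labels, and the decision to default to LTX 2.3 for drafts are hypothetical choices for illustration, not part of ComfyUI or either model's tooling.

```python
from dataclasses import dataclass


@dataclass
class Shot:
    """Requirements for a single shot (hypothetical schema)."""
    needs_audio: bool = False
    seconds: float = 5.0
    height: int = 720
    portrait: bool = False
    cinematic_camera: bool = False


def pick_model(shot: Shot) -> str:
    """Route a shot to a model label using the article's selection criteria."""
    # Hard requirements that only LTX 2.3 covers in this comparison.
    if shot.needs_audio or shot.portrait or shot.seconds > 5 or shot.height > 720:
        return "ltx-2.3"
    # Short, silent, 720p-or-below shots with demanding camera work.
    if shot.cinematic_camera:
        return "wan-2.2"
    # Assumption: default to the faster model for everything else.
    return "ltx-2.3"


print(pick_model(Shot(cinematic_camera=True)))                    # wan-2.2
print(pick_model(Shot(needs_audio=True, cinematic_camera=True)))  # ltx-2.3
print(pick_model(Shot(seconds=12, height=1080)))                  # ltx-2.3
```

Hard constraints are checked first so that a shot needing audio or more than 5 seconds is never sent to a model that cannot produce it, even when it also calls for cinematic camera work.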

The Bigger Picture: Why This Comparison Matters

The LTX 2.3 vs Wan 2.2 comparison reflects a maturation of the open-source AI video generation space. We've moved past the question of "is open-source video generation viable?" to "which open-source model best fits my specific production needs?"

Key trends driving this search:

- LTX 2.3's March 2026 release put a new contender on the table with unique audio capabilities

- Wan 2.2's proven track record as the quality benchmark for open-source video generation

- Growing demand for locally-deployable, commercially-licensable video generation models

- ComfyUI ecosystem enabling easy side-by-side testing and hybrid workflows

Both models continue to evolve rapidly. LTX has shown aggressive iteration speed (from 2.0 to 2.3 in a short period), while Wan's team has demonstrated depth of quality (the MoE approach delivering near-closed-source aesthetic results).

Frequently Asked Questions

Is LTX 2.3 better than Wan 2.2?

Neither model is universally "better." LTX 2.3 excels at high-resolution output, native audio, speed, and portrait video. Wan 2.2 excels at cinematic quality, prompt fidelity, and motion coherence. The best choice depends on your specific workflow and output requirements.

Can I run LTX 2.3 or Wan 2.2 on my local GPU?

Yes, both offer options for local deployment. LTX 2.3 provides distilled and fp8 quantized checkpoints. Wan 2.2 offers the TI2V-5B compact model and community GGUF quantizations. An RTX 4090 can run either model's smaller variants.

Does Wan 2.2 support audio generation?

The base T2V and I2V models of Wan 2.2 generate video only. A specialized Speech-to-Video (S2V) variant can generate lip-synced video from a static image + audio input. For general audio-video co-generation, LTX 2.3 is the more integrated solution.

What resolution does LTX 2.3 support?

LTX 2.3 supports 1080p, 1440p, and 4K at 24/25/48/50 fps, including native portrait mode (1080×1920). It can generate up to ~20 seconds per clip.

Is Wan 2.2 free for commercial use?

Wan 2.2 is released as an open-source model. While widely used commercially, you should review the specific license terms from Alibaba for your use case. LTX 2.3 offers a clearer commercial licensing structure with its Apache 2.0 license (free for companies under $10M annual revenue). You can try LTX 2.3 at LTX23.org to evaluate it for your project.

Which model has better ComfyUI support?

Both have strong ComfyUI integration. Wan 2.2 has a more mature community with more workflows, LoRAs, and tutorials available. LTX 2.3 ships updated official ComfyUI nodes and reference workflows, with the ecosystem growing rapidly.

Try LTX 2.3 Online — Free & Instant

Don't just read about it — experience the difference yourself. LTX23.org lets you generate LTX 2.3 videos directly in your browser:

- Text-to-Video: Describe your scene, get a video with synchronized audio

- Image-to-Video: Upload an image, watch it come to life

- No GPU required: Everything runs in the cloud — works on any device

- Free to start: Try it now, no credit card needed

→ Start generating with LTX 2.3 on LTX23.org

Last updated: March 2026. As both models continue to evolve, we'll keep this comparison current with the latest developments.